Onboarding process with Digital Identity Service API

This guide describes how to migrate the digital onboarding scenario from using the previous Document Server and Core Server to the new Digital Identity Service.

Documentation of the previous server APIs

The old documentation can be accessed here.

Webinar

The new API and the changes from the old one are explained in our webinar.

Changes in the back end API

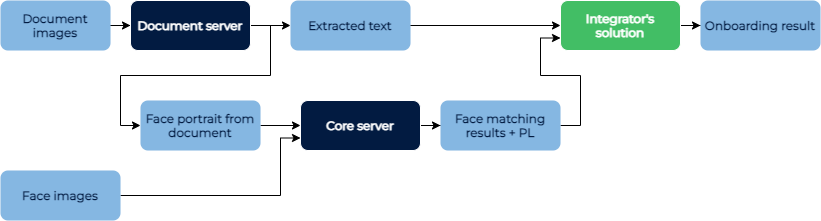

The most significant change is that the new DIS takes over the decision logic. Previously, the API returned only extracted data, and the integrator had to store and process it. Now the result of onboarding is a set of trust factors for the person being onboarded, who is called the “customer” in the new API.

Previous way of integration:

New way of integration:

Caching the onboarding data of customer

The main difference in the new API is that it is not stateless. All data related to onboarding are cached under the customer entity. This allows the Digital Identity Service (DIS) to perform identity verification and trust calculation internally. The integrator’s side becomes much simpler: it no longer needs to store results between steps or reimplement the verification logic. The cache keeps onboarding data for the period set in the configuration.

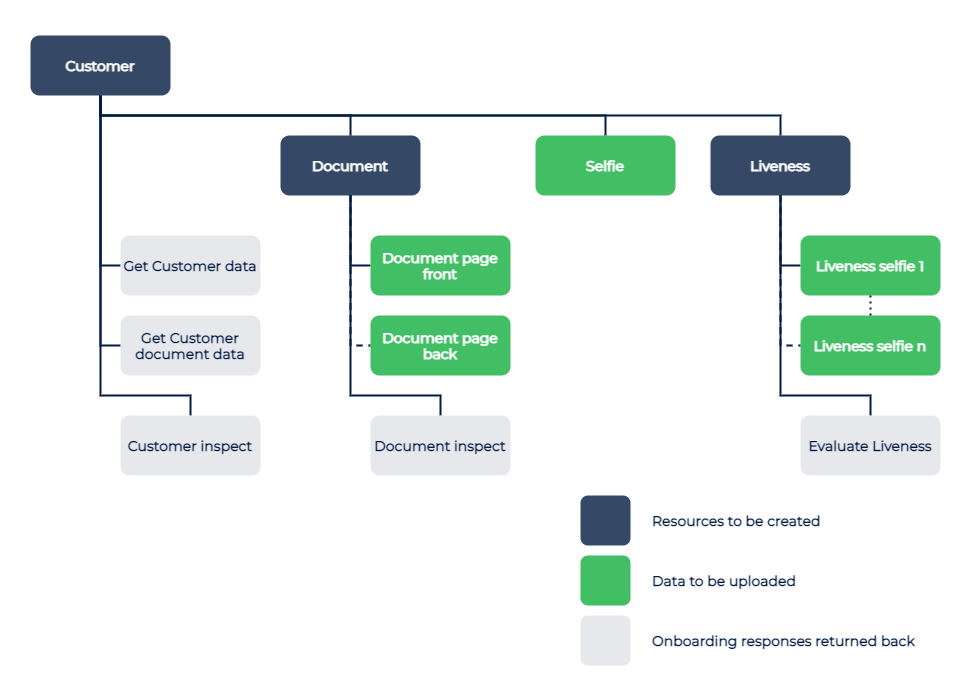
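The practical consequence of a time-limited cache is that a customer not completed within the configured period is no longer available. The sketch below only illustrates that behavior; it is not how DIS is implemented, and the class name, method names, and the injectable clock are all hypothetical.

```python
import time
from typing import Callable, Dict, Optional, Tuple


class ExpiringCustomerCache:
    """Illustrative stand-in for a server-side cache with a configured lifetime."""

    def __init__(self, ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._ttl = ttl_seconds
        self._clock = clock  # injectable so expiry can be demonstrated without waiting
        self._entries: Dict[str, Tuple[float, dict]] = {}

    def put(self, customer_id: str, data: dict) -> None:
        # Store the data together with the moment it stops being valid.
        self._entries[customer_id] = (self._clock() + self._ttl, data)

    def get(self, customer_id: str) -> Optional[dict]:
        entry = self._entries.get(customer_id)
        if entry is None:
            return None
        expires_at, data = entry
        if self._clock() >= expires_at:
            # Lifetime elapsed: the onboarding data is gone.
            del self._entries[customer_id]
            return None
        return data
```

On the integrator's side this means treating a missing customer as a signal to restart the onboarding rather than as an unexpected error.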

Structure of the onboarding data

The previous API offered only document OCR and face detection calls, which took an image as input and returned the extracted or identified data. The DIS API instead uses a data structure built around the customer, the person being onboarded:

Customer

- Contains all the images and data related to a person.

- Customer data can be retrieved once a document photo or selfie has been uploaded. It contains the information extracted by OCR that relates to the customer.

- The data extracted by OCR that are related to the document are in the Customer Document data.

Document

- Contains the images of one or both sides of the document. OCR runs when an image is uploaded. Data extracted from the document are found in the Customer entity.

- Document inspect uses the available data on both images to evaluate the authenticity of the document.

Selfie

- Primary photo of the customer. This is used for comparison with the portrait on the document, as well as estimating age and gender.

Liveness

- Contains images used to evaluate the liveness of the person. If only passive liveness is used, this contains the same photo as Selfie.

- Once a sufficient number of photos has been uploaded, the liveness evaluation can run. The type of evaluation must be specified in the request.

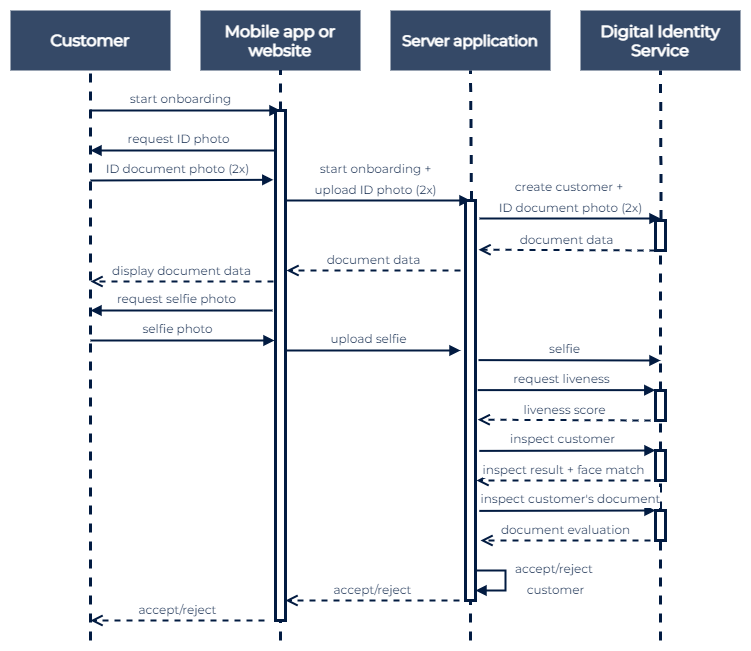
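The entities described above can be pictured as a small object model. This is a minimal sketch for orientation only: the class names follow the entity names in this guide, but the field names and the `add_selfie` helper are hypothetical and do not reflect the actual DIS schema.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Document:
    # Images of one or both sides of the document.
    pages: List[bytes] = field(default_factory=list)


@dataclass
class Selfie:
    # Primary photo of the customer.
    image: bytes = b""


@dataclass
class Liveness:
    # Photos collected for the liveness evaluation.
    images: List[bytes] = field(default_factory=list)


@dataclass
class Customer:
    # Root entity: everything about one onboarded person hangs off it.
    id: str
    document: Optional[Document] = None
    selfie: Optional[Selfie] = None
    liveness: Optional[Liveness] = None

    def add_selfie(self, image: bytes, passive_liveness: bool = True) -> None:
        """Hypothetical helper: store the selfie and, when only passive
        liveness is used, reuse the same photo for the liveness record."""
        self.selfie = Selfie(image)
        if passive_liveness:
            if self.liveness is None:
                self.liveness = Liveness()
            self.liveness.images.append(image)
```

The point of the model is that every image and every result belongs to one customer, so no identifier other than the customer's needs to be tracked between steps.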

Onboarding process flow

The recommended onboarding sequence is shown in the following chart:

Migrating the functions of previous DOT Document Server

The document scanning functionality of the DOT Document Server is available in the new Digital Identity Service API under the customers' document operations.

Document scanning

Before

There was one API call POST /api/v5/document/ocr that returned all the data extracted from the provided document page. The integrator’s side had to keep the data for future use.

After

The customer entity has a sub-entity, document. They have to be created in order: first the customer, then the document. Then both pages (or one page in the case of a passport) have to be uploaded using PUT /api/v1/customers/{id}/document/pages.

Both pages are processed, and results from them are aggregated. The data from the document can be read with GET /api/v1/customers/{id}. There are separate calls for getting image crops from documents.
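The sequence of calls for one document can be written down as an ordered plan. The sketch below assumes the customer already exists and its id is known. The two paths for uploading pages and reading the customer are the ones named in this guide; the path used to create the document sub-entity is an assumption, and `document_scan_plan` is a hypothetical helper name.

```python
from typing import List, Tuple

BASE = "/api/v1/customers"


def document_scan_plan(customer_id: str, page_count: int = 2) -> List[Tuple[str, str]]:
    """Return the ordered (method, path) calls for scanning one document."""
    if page_count not in (1, 2):
        raise ValueError("a document has one page (passport) or two pages")

    customer_path = f"{BASE}/{customer_id}"
    plan = [
        # Assumption: this path creates the document sub-entity.
        ("PUT", f"{customer_path}/document"),
    ]
    # One upload per page, using the path given in this guide.
    plan += [("PUT", f"{customer_path}/document/pages")] * page_count
    # Read the aggregated data extracted from all uploaded pages.
    plan.append(("GET", customer_path))
    return plan
```

Compared with the previous single OCR call, the extra steps buy server-side aggregation: the data is read once, after all pages are uploaded, instead of being merged by the integrator.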

Migrating the functions of previous DOT Core server

For cases where onboarding is not required, the face functionality of the DOT Core Server can be reproduced with the Face operations of the new Digital Identity Service API. Face functionality is also implemented in the customers' selfie operations of the Digital Identity Service API.

Face matching

Before

There were API calls POST /api/v6/face/detect and /api/v6/face/verify to detect a face in a picture and to compare two faces. The integrator’s side had to implement the logic and use an extracted face.

After

The customer entity has a sub-entity, selfie, to which the customer’s selfie has to be uploaded. As the document photos have been uploaded previously, the DIS can match the face on the selfie with the portrait on the document.
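A selfie upload could be prepared as follows. This guide does not state the selfie endpoint or the request body, so the path `/api/v1/customers/{id}/selfie`, the PUT method, and the JSON shape with a base64-encoded image are all assumptions modeled on the document pages call; check them against the DIS API reference. The function only builds the request and does not send it.

```python
import base64
import json
from urllib.request import Request


def build_selfie_upload(base_url: str, customer_id: str, image_bytes: bytes) -> Request:
    """Build (but do not send) a selfie upload request for an existing customer."""
    # Assumed body shape: the image travels as base64 text inside JSON.
    body = {"image": {"data": base64.b64encode(image_bytes).decode("ascii")}}
    return Request(
        # Assumed path, by analogy with .../document/pages.
        url=f"{base_url}/api/v1/customers/{customer_id}/selfie",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="PUT",
    )
```

Passing the returned object to `urllib.request.urlopen` would send it. No second face image is supplied, because the portrait comes from the document already stored under the same customer.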